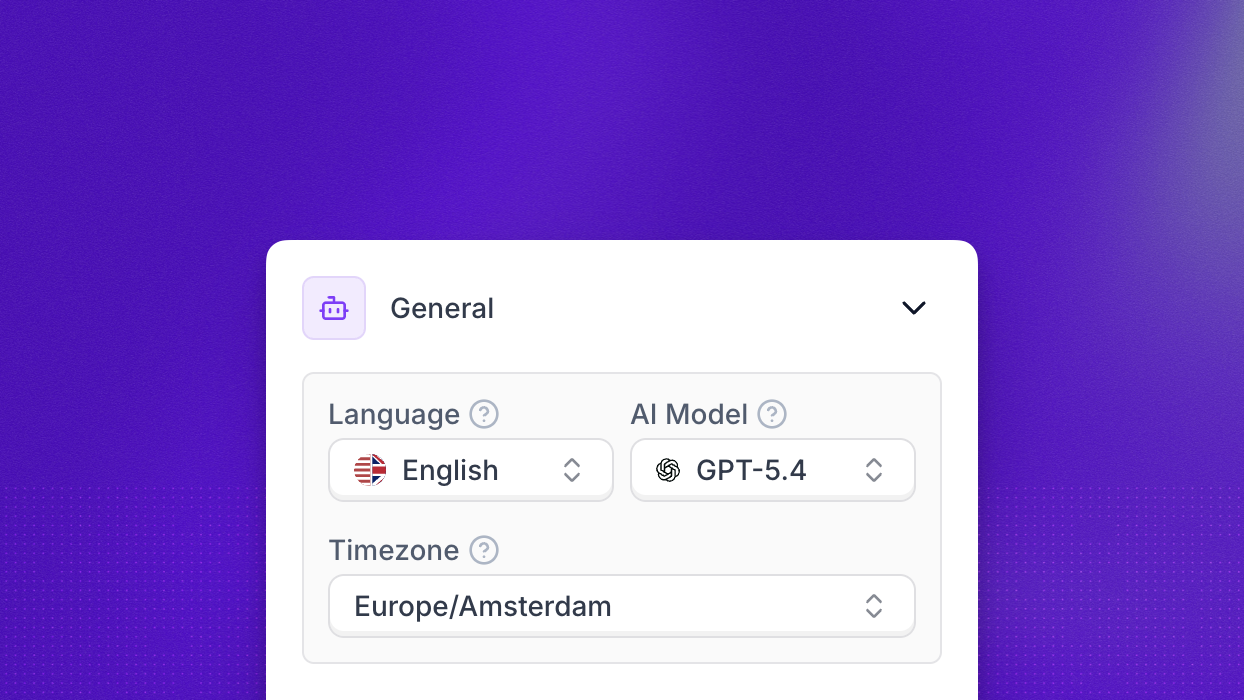

The General section of the agent editor contains the foundational settings that apply to every conversation your agent handles. This is where you choose the language your agent speaks, the AI model powering its responses, and the timezone it uses for date and time references.

The Language dropdown determines which language your agent uses for both speech recognition and voice synthesis. Changing the language automatically adjusts the underlying transcription engine and voice options to match.

The AI Model selector controls which large language model powers your agent’s responses. Pick the model that matches your latency, cost, and quality requirements.

The Timezone dropdown sets the local time context your agent operates in. New agents inherit the default from Settings > Preferences, but you can override it here for an individual agent. An accurate timezone matters whenever the agent refers to a time: in call openings, when promising a follow-up, and when booking calendar slots. The full list of supported values is available on the Timezones reference page.
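To see why the timezone setting matters, the sketch below converts one fixed instant into three different local times and picks a greeting for each. It is only an illustration, not the product's implementation: the function names `local_time_for_agent` and `greeting_for` are hypothetical, and it assumes timezone values are IANA names such as `America/New_York` (check the Timezones reference page for the values actually supported).

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo  # standard library, Python 3.9+


def local_time_for_agent(instant: datetime, tz_name: str) -> datetime:
    """Convert a timezone-aware instant to the given timezone.

    Hypothetical helper: tz_name is assumed to be an IANA timezone name.
    """
    if instant.tzinfo is None:
        raise ValueError("instant must be timezone-aware")
    return instant.astimezone(ZoneInfo(tz_name))


def greeting_for(instant: datetime, tz_name: str) -> str:
    """Pick a call opening based on the local hour (illustrative thresholds)."""
    hour = local_time_for_agent(instant, tz_name).hour
    if hour < 12:
        return "Good morning"
    if hour < 18:
        return "Good afternoon"
    return "Good evening"


# The same moment in time, seen from three timezone settings.
instant = datetime(2024, 3, 15, 14, 0, tzinfo=timezone.utc)
for tz_name in ("America/New_York", "Europe/Berlin", "Asia/Tokyo"):
    local = local_time_for_agent(instant, tz_name)
    print(tz_name, local.strftime("%Y-%m-%d %H:%M"), greeting_for(instant, tz_name))
```

The same call would open with a morning greeting in New York, an afternoon greeting in Berlin, and an evening greeting in Tokyo, so a wrong timezone setting can make a correctly computed time sound wrong to the caller.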

For lower-level controls over transcription, latency, interruptions, and vocabulary, see Additional settings.